Cutting through the bull: AI slop and MIL

A recent paper by the Make America Healthy Again commission appeared to be meticulously documented with footnotes and citations, part of the "radical transparency" the US Government has been promising. And yet, it turns out, many of its citations were AI hallucinations.

Hallucinations are common in Large Language Models, where the models, designed as text predictors, produce fake references or answer questions incorrectly but with certitude. Some researchers use a blunter term: bullshit.

"Among philosophers, ‘bullshit’ has a specialist meaning," an article in Scientific American explains. "When someone bullshits, they’re not telling the truth, but they’re also not really lying."

According to the authors, Generative AI bullshits because it is indifferent to accuracy. For DW Akademie specialists and researchers, this means there is an even greater need for Media and Information Literacy (MIL) skills.

The sloppy side of AI

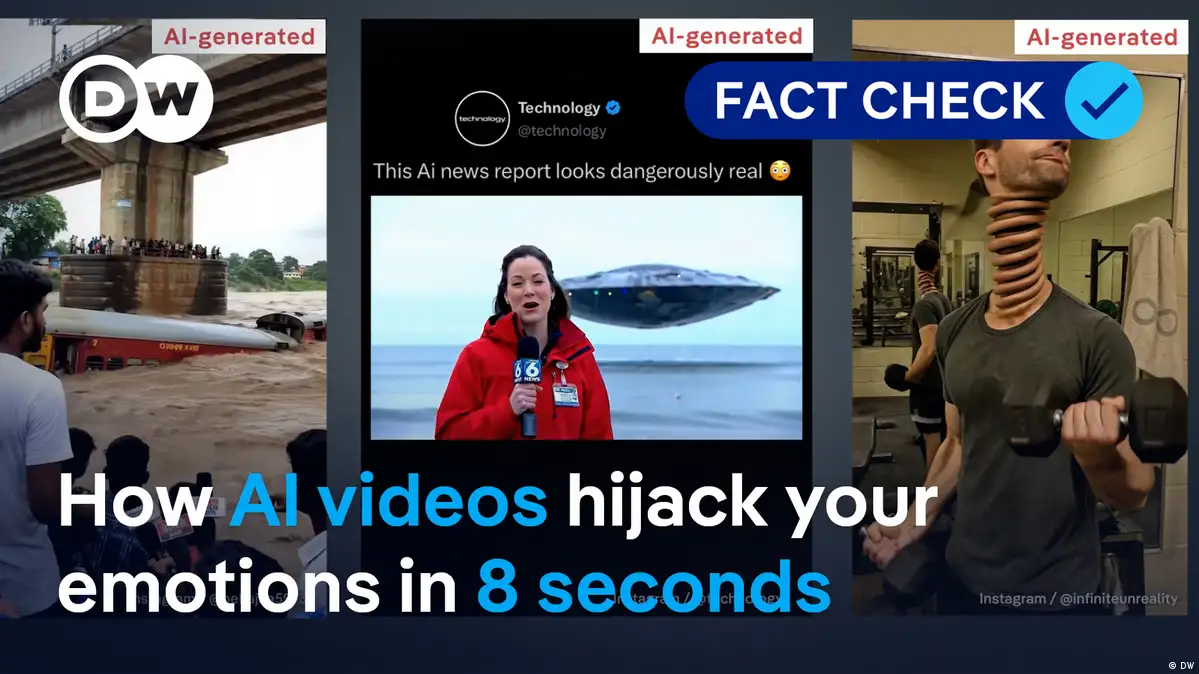

A recent study found that more than 50% of new web pages are now created by AI, and some are published with nothing more than a prompt. Plus, with new video and image creation tools, even more content can be made almost instantly.

This low-quality content is called AI slop. And so, with AI’s tendency toward hallucinations and the creation of misinformation, how can Media and Information Literacy skills be adjusted to sift through it all?

Roslyn Kratochvil Moore leads a team working with MIL at DW Akademie. When asked if the amount of AI slop will upend MIL skills or make media literacy obsolete, Moore did not mince words.

"Not remotely," she responded. "What we are dealing with is a changed environment, where everything is enhanced, for good and bad."

She pointed out that, while things like deepfakes have become much more believable, checking and countering them has become easier as well.

"The theme that lies at the center of media literacy remains," Moore said. "And that’s critical thinking."

Where disinformation thrives

Steffen Leidel, a senior consultant with DW Akademie who leads the organization's community of practice on AI, said that some of the skills emphasized at recent MIL trainings must already fall by the wayside. "The days of detecting AI by counting fingers, looking for weird lighting, hands or faces are almost over," he stated.

In fact, according to the Rethinking Media Literacy 2025 report from the World Economic Forum (WEF), by age 11, children’s ability to discern AI-generated content is far exceeded by their confidence. Training in AI identification can therefore backfire if it creates false certainty.

This gap between perception and reality is right where disinformation actors thrive. With tools that can create almost infinite content, they have the chance to pollute the information landscape.

"Slopaganda"

The new type of propaganda, or "slopaganda," creates new problems, with similar results. Leidel describes it as "an endless stream of cheaply generated, emotionally charged AI content flooding spaces and eroding trust."

AI slop is then used not necessarily to convince someone that the disinformation is real, but to stoke divisions and weaken online spaces.

And, in the end, it can have the same effect: "Disinformation disrupts the ability of individuals to freely make informed choices about what is in their own best interests," the WEF report states.

Digital security to the fore

Moore, who together with her colleagues works on MIL for young people, points out that the types of skills that underpin MIL still hold: check sources, check bias and stop and think before reacting, responding or sharing. At the same time, studies show that MIL must keep up with the shifting online reality.

"For example, digital security and privacy was a side element of our work in the past," Moore said. "Now, it is a central tenet of our MIL focus."

In particular, online scams have become more common and more sophisticated. Students and teachers have to learn to recognize them.

Moore and her team have been working with organizations such as PYALARA in the West Bank and MiLLi in Namibia. They have developed the "Heroes and Villains Guidebook," which has been in print since 2023 and has been used in classrooms, workshops, youth clubs and rural community settings around the world. It will soon get an AI supplement, which is being workshopped at this week’s UNESCO Global MIL Week in Colombia.

A dangerous half-knowledge

"Another hurdle is getting teachers to teach something where they might not be the expert in the room," Moore explained, with many students potentially knowing more about AI than educators.

"Students are highly clever and are often very advanced in using AI tools," Moore said. "But the challenge is how to break down this complex technology to ensure basic understanding of AI while promoting responsible, informed, consensual use that encourages critical thinking."

And the challenges within different regions can significantly affect which MIL topics should be highlighted. Needs in the West Bank, for example, which suffers from high levels of emotionally manipulative disinformation, differ from those in Namibia, where sharing personal information is a major concern.

The limits of MIL

Still, MIL experts note that the struggle is real.

"Online spaces are a shared societal endeavor that must start with education and empowerment," says the WEF report, although it goes on to explain that empowerment and education are only parts of the puzzle that must be tied with better regulation and AI documentation.

But at least with MIL skills, the next generation will have a fighting chance to cut through the bullshit.